Proactive AI Safety: Predicting Model Failure via Drift Simulation

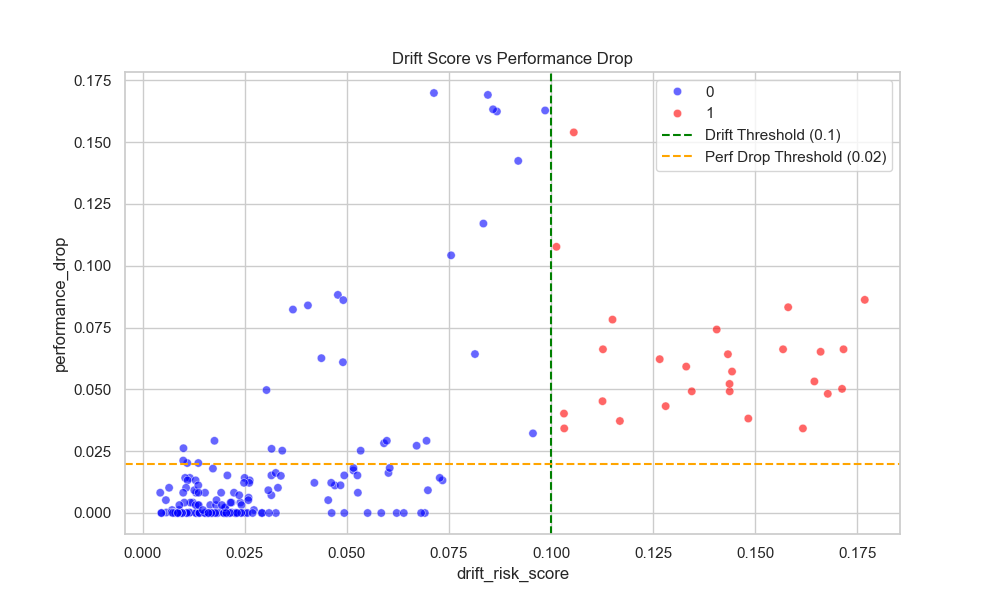

In production machine learning systems, data distribution shift (drift) is a primary cause of silent model performance degradation. Traditional monitoring is reactive: it either alerts purely on statistical distance (e.g., a Population Stability Index, PSI, above 0.2) or waits for lagged ground-truth labels to measure the performance drop. Both approaches fall short. The former causes alert fatigue, because a shift that is statistically large does not necessarily hurt the model and so produces false alarms; the latter is too slow to prevent business loss.
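To make the distance-based alert concrete, here is a minimal sketch of a PSI calculation for a single numeric feature. The function name `population_stability_index`, the decile binning, and the `eps` smoothing constant are illustrative assumptions, not code taken from the project.

```python
import numpy as np

def population_stability_index(reference, current, n_bins=10, eps=1e-6):
    """PSI between two 1-D samples, using quantile bins of the reference sample."""
    reference = np.asarray(reference, dtype=float)
    current = np.asarray(current, dtype=float)

    # Interior bin edges are reference quantiles; the outer bins are open-ended.
    interior_edges = np.quantile(reference, np.linspace(0, 1, n_bins + 1)[1:-1])

    # Map every value to a bin index in 0..n_bins-1 and count per bin.
    ref_counts = np.bincount(
        np.searchsorted(interior_edges, reference, side="right"), minlength=n_bins
    )
    cur_counts = np.bincount(
        np.searchsorted(interior_edges, current, side="right"), minlength=n_bins
    )

    # Clip empty bins so the logarithm stays finite (assumed smoothing choice).
    ref_frac = np.clip(ref_counts / reference.size, eps, None)
    cur_frac = np.clip(cur_counts / current.size, eps, None)

    return float(np.sum((cur_frac - ref_frac) * np.log(cur_frac / ref_frac)))

# Synthetic illustration: one batch from the same distribution, one shifted.
rng = np.random.default_rng(0)
reference = rng.normal(0.0, 1.0, size=5000)
same = rng.normal(0.0, 1.0, size=5000)
shifted = rng.normal(1.0, 1.0, size=5000)

psi_same = population_stability_index(reference, same)
psi_shifted = population_stability_index(reference, shifted)
print(f"PSI (same distribution): {psi_same:.4f}")
print(f"PSI (mean shifted by 1): {psi_shifted:.4f}")
```

A rule such as `psi > 0.2` fires on the shifted batch regardless of whether the model's accuracy actually changed, which is the source of the false alarms described above.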

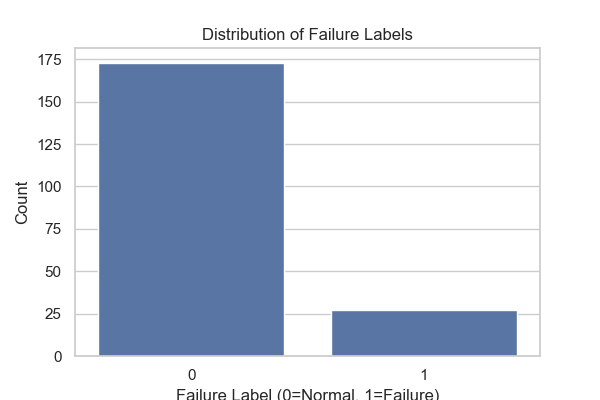

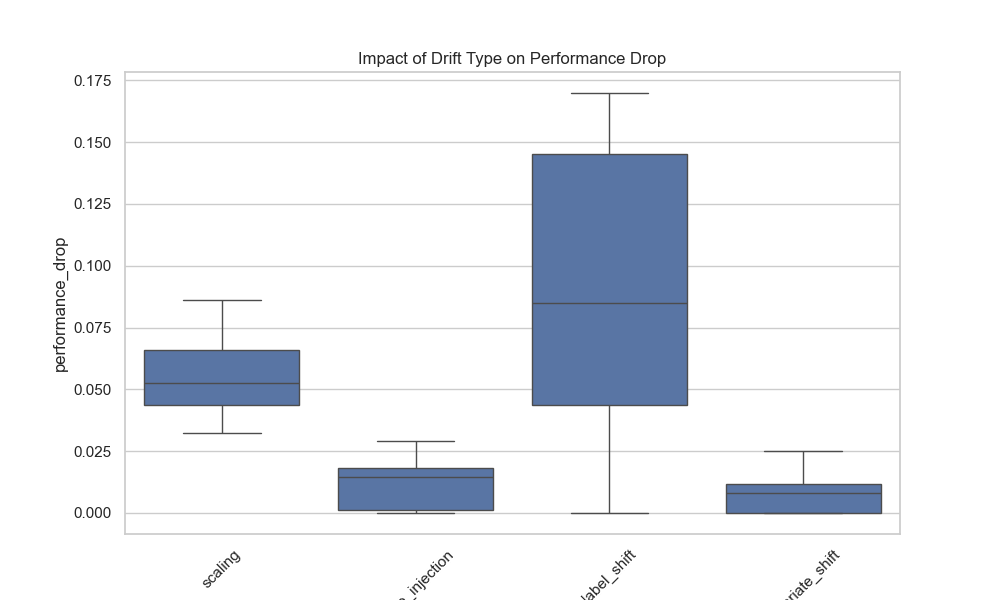

This project implements a proactive safety system that predicts model failure before it happens. It treats failure prediction as a supervised learning problem: a secondary meta-model takes drift metrics as input and predicts the probability of significant performance degradation. To build a labeled dataset for this meta-learner, we simulate a range of drift scenarios, moving from heuristic-based alerting to model-based safety.
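The idea can be sketched end to end on a toy problem. Everything below is an assumption made for illustration and is not the project's actual pipeline: the one-feature task, the threshold "primary model", the two drift metrics, the 0.05 accuracy-drop failure threshold, and the hand-rolled logistic regression standing in for the meta-model.

```python
import numpy as np

rng = np.random.default_rng(0)

def sample(n):
    """Toy binary task: class-conditional Gaussians centred at -1 and +1."""
    y = rng.integers(0, 2, size=n)
    x = rng.normal(loc=2.0 * y - 1.0, scale=1.0)
    return x, y

def primary_predict(x):
    """Stand-in for the production model: a fixed decision threshold at 0."""
    return (x > 0).astype(int)

# Reference data and baseline accuracy of the primary model.
x_ref, y_ref = sample(5000)
baseline_acc = np.mean(primary_predict(x_ref) == y_ref)

def simulate_scenario():
    """Apply a random offset and noise level; return drift metrics and a failure label."""
    x, y = sample(1000)
    shift = rng.uniform(0.0, 1.0)
    noise = rng.uniform(0.0, 1.0)
    x_drift = x + shift + rng.normal(0.0, noise, size=x.shape)

    # Drift metrics are computed from features only, so they need no labels.
    mean_shift = abs(x_drift.mean() - x_ref.mean()) / x_ref.std()
    std_ratio = x_drift.std() / x_ref.std()

    # The label uses ground truth, which is available only inside the simulation.
    acc = np.mean(primary_predict(x_drift) == y)
    failed = int(baseline_acc - acc > 0.05)  # assumed failure threshold
    return [mean_shift, std_ratio], failed

def fit_logistic(X, y, lr=0.5, steps=2000):
    """Plain gradient-descent logistic regression (illustrative meta-model)."""
    w, b = np.zeros(X.shape[1]), 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))
        w -= lr * X.T @ (p - y) / len(y)
        b -= lr * np.mean(p - y)
    return w, b

# Build the labeled meta-dataset from simulated drift scenarios.
rows = [simulate_scenario() for _ in range(600)]
X = np.array([r[0] for r in rows])
y = np.array([r[1] for r in rows])

# Standardize with training statistics, then fit on the first 400 scenarios.
mu, sigma = X[:400].mean(axis=0), X[:400].std(axis=0)
w, b = fit_logistic((X[:400] - mu) / sigma, y[:400])

def failure_probability(metrics):
    """Predicted probability of failure for rows of [mean_shift, std_ratio]."""
    z = (np.asarray(metrics, dtype=float) - mu) / sigma
    return 1.0 / (1.0 + np.exp(-(z @ w + b)))

# Evaluate on the 200 held-out scenarios.
test_acc = np.mean((failure_probability(X[400:]) > 0.5) == y[400:])
print(f"meta-model held-out accuracy: {test_acc:.2f}")
```

At monitoring time only the drift metrics are needed, so `failure_probability` can be evaluated on a new batch before any ground-truth labels arrive.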

GitHub link: https://github.com/aditya0589/Early-Model-Failure-Drift-Impact-Prediction
